In a previous article, I showed how to ingest data inline, listing each individual row to ingest. For large datasets, this is not a practical way to initialize data in a table. Another option is to ingest from CSV data stored in an Azure Storage Blob.

The syntax is

.ingest into table tablename

(

h'blob_url_and_sas_token'

)

where:

- tablenameis the name of the ADX table into which you want to ingest data

- blob_url_and_sas_token is the URL of blob, followed by the SAS token of the Azure Storage Account containing that blob. There are no spaces between the 2 strings.

Each row in the CSV file will create one row in the ADX table. The format of the CSV file must match the schema of the ADX table.

Demo

For the demo below, we will use a table defined by the following ADX code:

.create table testdata(

timeStamp: datetime,

someNumber: long,

someString: string,

someJson: dynamic

)

and we will create a blob containing the following text:

2022-02-08T21:25:04.811Z,1,foo1,{'foo':'bar1'}

2022-02-08T21:25:07.838Z,2,foo2,{'foo':'bar2'}

2022-02-08T21:25:10.912Z,3,foo3,{'foo':'bar3'}

2022-02-08T21:25:13.829Z,4,foo4,{'foo':'bar4'}

2022-02-08T21:25:15.415Z,5,foo5,{'foo':'bar5'}

Blob and Storage Account Configuration

In an Azure Storage Account, create a blob container and upload to the blob container a file containing the comma-delimited text above.

NOTE: For more information on working with Azure Storage Accounts and Blobs, see this article

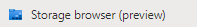

After uploading the blob file, you can the Storage Browser browser blade by clicking the [Storage Browser] button (Fig. 1) in the left menu; then, navigating to the container containing your import CSV file, as shown in Fig. 2.

Fig. 1

Fig. 2

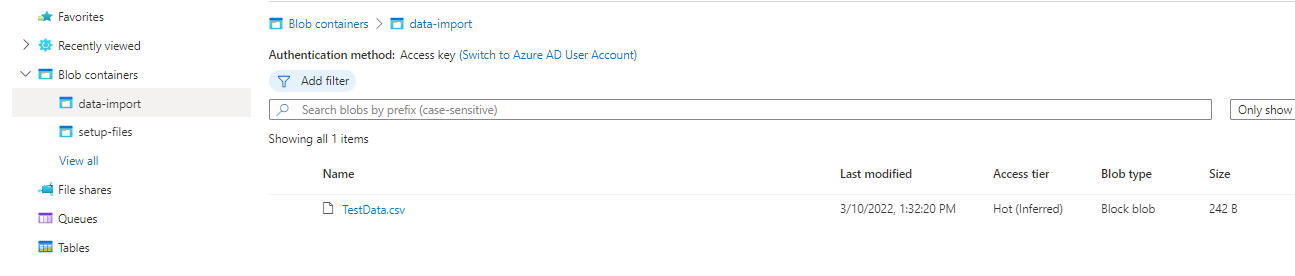

Click your import file blob in the list to view the properties, as shown in Fig. 3.

Fig. 3

Copy the URL of this file and save it for later.

Next, generate an SAS Token for the storage account. In the left menu, under the "Security + networking" section (Fig. 4), click the [Shared access signature] button (Fig. 5)

Fig. 4

Fig. 5

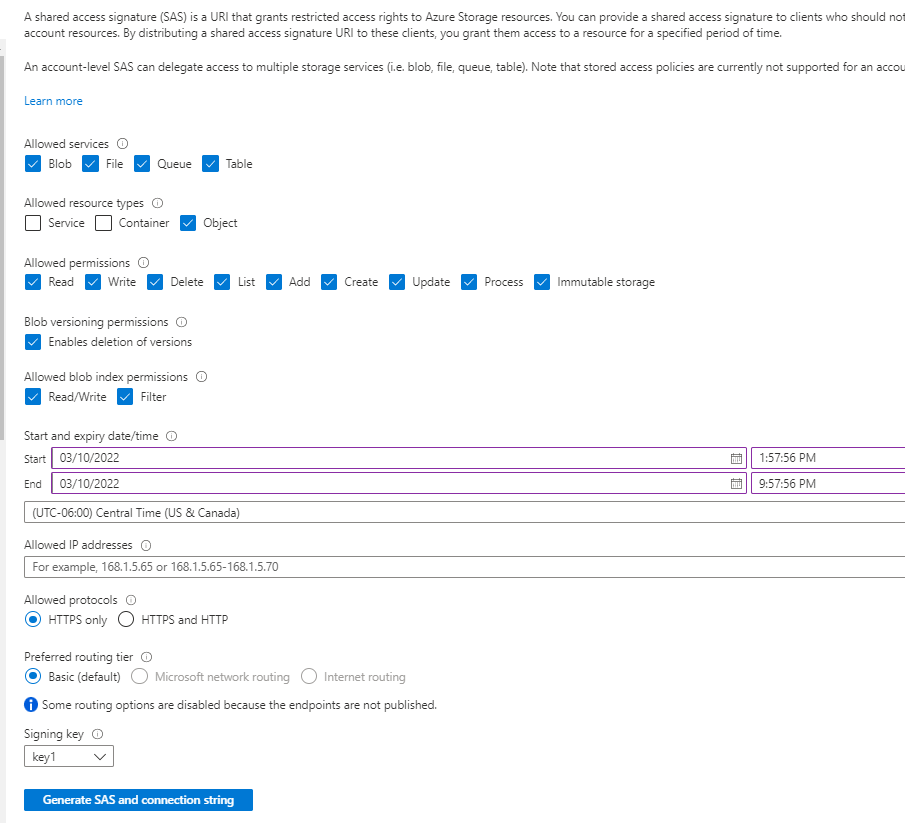

The SAS dialog displays, as shown in Fig. 6.

Fig. 6

At the "Allowed resource types", check the "Object" checkbox.

The valid start and end date times default to the current datetime through 8 hours from now. Adjust these if you want to access the blob beyond the end time or will not begin the import until significantly later.

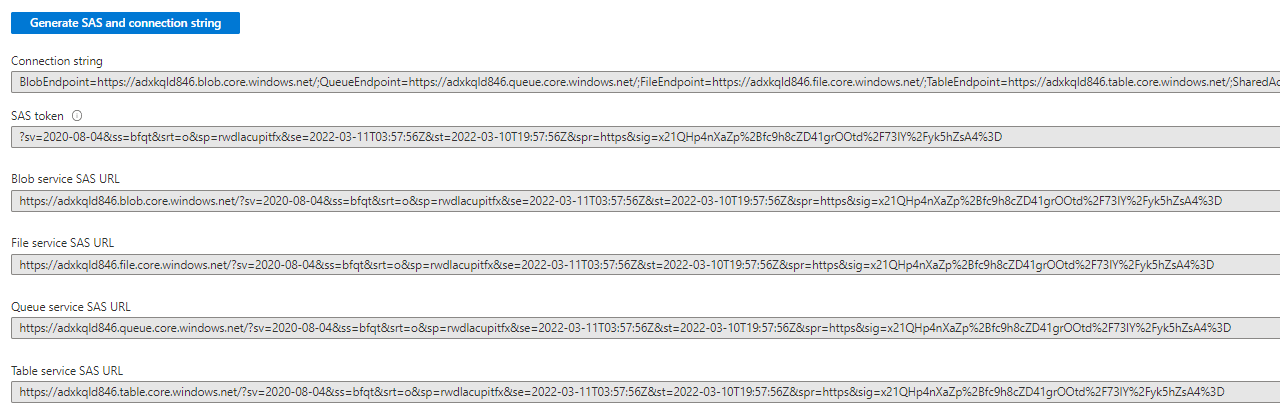

Click the [Generate SAS and connection string] button to generate a SAS token. Generated data will display below the button, as shown inf Fig. 7.

Fig. 7

Copy the value in the SAS token field and save it for later.

Ingesting Data into ADX Table

Open the Azure Data Explorer interface, log in and select the database containing your import table.

From here, execute the ingest command described above. For our sample, this is:

.ingest into table testdata

(

h'https://dgteststorage.blob.core.windows.net/data-import/TestData.csv?sv=2020-08-04&ss=bfqt&srt=o&sp=rwdlacupitfx&se=2022-03-11T03:57:56Z&st=2022-03-10T19:57:56Z&spr=https&sig=x21QHp4nXaZp%2Bfc9h8cZD41grOOtd%2F73lY%2Fyk5hZsA4%3D

)

You should now be able to query the table with its data via the following KQL command:

testdata

Troubleshooting

If data is not imported, error information can be retrieved via the following command:

.show ingestion failures

| order by FailedOn

Look at the row matching the time of the import (most likely the most recent row). Common issues are an invalid or expired SAS token and data that does not match the table schema.

Other Data Formats

ADX supports ingestion from other file formats than CSV. For example, Parquet, Avro, and JSON files can also be ingested. You can find a complete list here.